Seizures are common, experienced by around 10% of people in their lifetime. While in some cases epileptic seizures are the consequence of a particular brain insult, a significant proportion of people have an ongoing susceptibility, termed epilepsy and rather parsimoniously defined as having had at least two unprovoked epileptic seizures. The distinction between a single unprovoked seizure and epilepsy is somewhat moot, since around 40% of those who have such an event will have another within two years if left untreated.

Perhaps unhelpful, or harmful, would be a better description than moot. Seizures are not a trivial condition; quite apart from the shocking nature of the event and the restrictions that it imposes upon lifestyle, such as loss of the legal entitlement to drive a car, they are associated with a small but real mortality risk. While death is more likely in those who have frequent seizures, those who have had a single seizure are not immune.

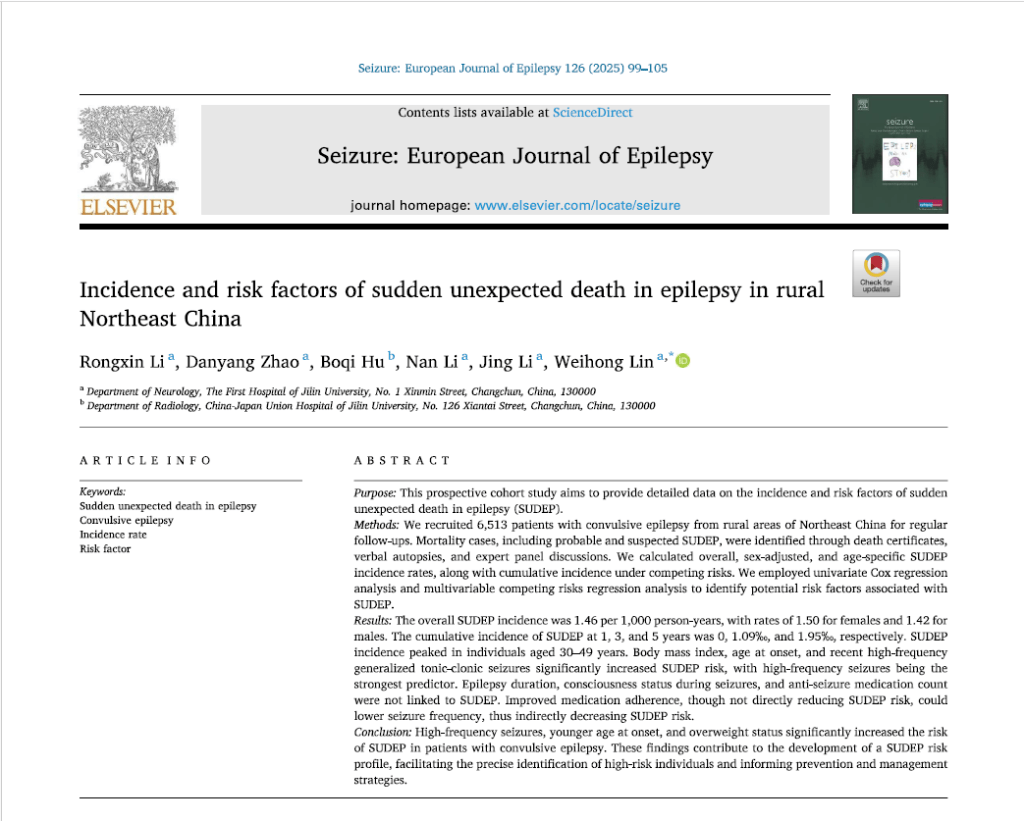

The mortality associated with epilepsy is termed sudden unexpected death in epilepsy (SUDEP) and was defined originally by Nashef as:

“a sudden, unexpected, witnessed or unwitnessed, non-traumatic, and non-drowning death in an individual with epilepsy, occurring during benign circumstances, with or without evidence of a seizure, excluding documented status epilepticus, where postmortem examination does not reveal a cause of death.”

Note that SUDEP does not refer to death from having a seizure in an environment where it is dangerous to lose consciousness or control. Nor is it necessarily due to having a seizure at the time of death, though this is generally assumed.

Individuals who have epilepsy are often young and otherwise well and so SUDEP is obviously an extremely emotive topic, both for sufferers and doctors. There are cases where neurologists have been sued for not trying hard enough to make their patients compliant with medication and not informing them of the risk of SUDEP.

But how do we inform them of the risk if we do not know it? In developed countries the risk in studies varies from 0.35 to 9.3 per 1000 patients with convulsive epilepsy per year, an almost thirty-fold variation. Such wildly varying estimates make a meta-analysis proclaiming that they average out at 1.2/1000 practically useless.

It remains unclear whether the inaccuracy of SUDEP risk estimates is due to varying definitions, underreporting, the lack of a post mortem to exclude other causes, population differences or a combination of factors. The studies that have been conducted have usually been retrospective and based on uncontrolled samples.

Understanding the level of risk matters for more than informing patients; one might also ascertain risk factors and so be able to mitigate them by medical intervention or education.

Again the findings are inconsistent between studies and range from male sex, suffering generalised tonic-clonic seizures and poor medical compliance to seizure frequency, young age of onset, sleeping alone or being on multiple antiepileptic drugs.

The topic of this journal club is a large, long-term prospective cohort study by Rongxin Li et al, "Incidence and risk factors of sudden unexpected death in epilepsy in rural Northeast China", published last year in Seizure: European Journal of Epilepsy.

They applied Nashef’s definitions of definite, probable and possible SUDEP in a prospective epidemiological cohort of patients with convulsive epilepsy, conducting face-to-face interviews for epilepsy with monthly assessments over a median of 7 years. The cohort comprised 6513 patients with a median duration of epilepsy of 32 years, of whom 855 were lost to follow up. This gave a total of around 44,000 patient-years. All were treated with valproate or phenobarbitone, or additional antiepileptic drugs (AEDs) prescribed by a specialist.

The headline finding was 64 SUDEP deaths, which gives an annual incidence of 1.4/1000.
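The arithmetic behind the headline figure can be sketched as follows. This is a minimal illustration, not the study's own calculation: the function names are hypothetical, the person-years value is the rounded "around 44,000" quoted above (which is why the result comes out nearer 1.45 than 1.4), and the interval uses a simple log-scale normal approximation that the study did not necessarily use.

```python
import math


def incidence_per_1000(events: int, person_years: float) -> float:
    """Crude incidence rate, expressed per 1000 person-years."""
    return 1000.0 * events / person_years


def approx_rate_interval_per_1000(events: int, person_years: float, z: float = 1.96):
    """Approximate 95% interval for a rate.

    Assumption: normal approximation on the log scale, with the standard
    error of the log rate taken as 1 / sqrt(events). This interval is an
    illustration and is not a figure reported by the study.
    """
    rate = incidence_per_1000(events, person_years)
    factor = math.exp(z / math.sqrt(events))
    return rate / factor, rate * factor


sudep_deaths = 64        # reported in the study
person_years = 44_000    # rounded value from the text, so results are approximate

rate = incidence_per_1000(sudep_deaths, person_years)
low, high = approx_rate_interval_per_1000(sudep_deaths, person_years)

print(f"Incidence: {rate:.2f} per 1000 patient-years")
print(f"Approximate 95% interval: {low:.2f} to {high:.2f} per 1000 patient-years")
```

With only 64 events the interval is fairly wide, which is worth bearing in mind when comparing the figure with estimates from other studies.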

Regarding risk factors, they found the following to be statistically significant on univariate Cox regression:

- Higher BMI (p = 0.0116)

- Earlier age at onset (p = 0.001)

- Longer duration of epilepsy (p = 0.007)

- Higher generalised seizure frequency in the last month or last year (p = 0.001)

There were in fact 9 times as many non-SUDEP as SUDEP deaths in this cohort, so they could use these events as competing risks to reduce bias. Using multivariable competing risks regression on the factors that were significant on univariate analysis, they determined relative risks:

- Obesity had relative risk 1.06 (95% CI 1.02-1.11)

- Later age of onset was a protective factor

- Increased seizure frequency in the last month had relative risk 12.10 (95% CI 7.39-19.84)

- Increased seizure frequency in the last year had relative risk 11.56 (95% CI 1.59-83.92)
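One way to read these intervals is to look at them on the log scale, where estimates from regression models of this kind are usually symmetric. The sketch below assumes that is the case here (the source does not say so) and uses a hypothetical helper, `log_scale_summary`, to recover the geometric midpoint of each interval and an implied standard error of the log relative risk.

```python
import math


def log_scale_summary(ci_low: float, ci_high: float, z: float = 1.96):
    """Return (geometric midpoint, implied standard error of log RR).

    Assumption: the 95% interval is symmetric on the log scale, i.e.
    exp(log(RR) +/- z * SE). Not stated in the source.
    """
    midpoint = math.sqrt(ci_low * ci_high)
    se_log = (math.log(ci_high) - math.log(ci_low)) / (2 * z)
    return midpoint, se_log


# (label, reported relative risk, CI lower bound, CI upper bound)
reported = [
    ("Obesity", 1.06, 1.02, 1.11),
    ("Seizure frequency, last month", 12.10, 7.39, 19.84),
    ("Seizure frequency, last year", 11.56, 1.59, 83.92),
]

for label, rr, lo, hi in reported:
    midpoint, se_log = log_scale_summary(lo, hi)
    print(f"{label}: reported RR {rr:.2f}, midpoint {midpoint:.2f}, "
          f"SE(log RR) {se_log:.2f}, upper/lower ratio {hi / lo:.1f}")
```

Under that assumption the midpoints agree with the reported point estimates, and the comparison makes the difference in precision plain: the last-month estimate is reasonably tight, whereas the last-year interval spans a more than fifty-fold range.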

Comments

It is worth putting the 1.4/1000 figure, which closely matches the consensus meta-analysis figure of 1.2/1000, in the context of overall risk, as many patients may not grasp its significance. There are around 20,000 accidental deaths per year in the UK. Out of a population of 68 million, this gives roughly 1 in 3000, or 0.3/1000 per year. So the annual risk of SUDEP in a patient with epilepsy is four to five times as great as that of accidental death. For further comparison, patients often want brain scans as they are worried they might have a brain tumour. The incidence of brain tumour in the general population (and likely also in the population of people with chronic episodic headache) is 20 per 100,000 per year, so 1 in 5000, or 0.2/1000.
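The comparison can be laid out as a short calculation that puts each risk on the same per-1000-per-year scale. This is only a sketch using the round numbers quoted in the paragraph above; the helper `per_1000` and the dictionary labels are illustrative names.

```python
def per_1000(events: float, population: float) -> float:
    """Convert an event count in a population to a rate per 1000."""
    return 1000.0 * events / population


# Annual risks per 1000 people, from the figures quoted in the text
annual_risk_per_1000 = {
    "SUDEP (this study)": 1.4,
    "Accidental death, UK": per_1000(20_000, 68_000_000),
    "Brain tumour, general population": per_1000(20, 100_000),
}

for label, rate in annual_risk_per_1000.items():
    one_in = round(1000 / rate)
    print(f"{label}: {rate:.2f}/1000 per year (about 1 in {one_in:,})")

ratio = (annual_risk_per_1000["SUDEP (this study)"]
         / annual_risk_per_1000["Accidental death, UK"])
print(f"SUDEP versus accidental death: {ratio:.1f} times")
```

Using the unrounded accidental death rate (about 0.29/1000) the ratio comes out between four and five, depending on whether 1.2 or 1.4 per 1000 is taken as the SUDEP figure.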

Regarding lifetime risk, that of death by road traffic accident (RTA) is 1 in 100, but the lifetime risk of SUDEP in a patient with epilepsy has been estimated at 7-12%. (This seems quite high; even if a patient has epilepsy for as long as 50 years, an annual risk of 1.2/1000 gives 1.2/1000 × 50 = 60/1000, or 6%.)
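The back-of-envelope lifetime figure can be checked with a short calculation. The sketch below compares simple multiplication with a compounded version; both assume a constant annual risk of 1.2/1000 and ignore death from other causes, which are simplifying assumptions rather than anything the study states. The function names are illustrative.

```python
def linear_cumulative_risk(annual_risk: float, years: int) -> float:
    """Annual risk multiplied by years (the back-of-envelope method)."""
    return annual_risk * years


def compounded_cumulative_risk(annual_risk: float, years: int) -> float:
    """Probability of at least one event over `years`, assuming the same
    independent annual risk each year and no competing causes of death."""
    return 1.0 - (1.0 - annual_risk) ** years


annual_risk = 1.2 / 1000  # consensus annual SUDEP risk quoted in the text

for years in (10, 30, 50):
    linear = linear_cumulative_risk(annual_risk, years)
    compounded = compounded_cumulative_risk(annual_risk, years)
    print(f"{years} years: linear {linear:.1%}, compounded {compounded:.1%}")
```

At this level of annual risk the two methods differ very little, so compounding does not explain the gap between roughly 6% and the published 7-12% estimates; a higher annual risk in some subgroups or a longer duration of epilepsy would be needed.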

The criteria for probable SUDEP are as follows: a history of epilepsy in the patient; sudden and unexpected death while in a healthy state; death occurring within minutes; death occurring during normal activities in benign circumstances; and no definitive cause of death identified upon clinical examination.

The criteria for possible SUDEP are as follows: the presence of a competing cause of death; no other significant underlying disease; death occurring in water but without complete submersion of the individual; and death occurring in a normal environment without an autopsy.

But, having explained all this, the study ignored it and lumped all possible and probable cases with definite cases because they had no post-mortem data or examinations at death. This is a real issue; in a previous study in Chalfont in 2013, 40% of the apparent SUDEP deaths were cardiovascular related and not epilepsy related. The non-SUDEP death rate in the study population was also quite high, which increases the uncertainty over cause of death.

In addition, the study reported that there was no correlation with AED adherence, but adherence was only ascertained by asking patients at assessments. One would have to question whether patients recruited in a single cohort and followed every month for many years would be reliable in retrospectively reporting their compliance. Other studies have recorded AED levels at autopsy and have indeed found a correlation, as might be supposed.

While the study might overestimate risks somewhat because of lack of post-mortem data, the prospective data ascertainment would seem to give a reliable figure. Moreover, the monthly assessments could give good data on seizure frequency, which was found to be the major risk factor for SUDEP.

Finally, as the study points out, it was conducted in a single rural region with a population of likely uniform ethnicity. This might be somewhat different from the population in other countries.

Conclusion

The study gives more confidence in citing the figure of around 1.2/1000 per year for the risk of SUDEP. Of particular interest was the major risk factor of increased seizure frequency; in clinical practice, if a patient reports increased generalised tonic clonic seizure frequency, they should be assessed as a matter of some urgency rather than waiting for routine follow up, which in the UK may be six months or a year.
